The rapid growth of artificial intelligence, data science, and high-performance computing has significantly increased the demand for powerful processing hardware. At the center of this transformation are NVIDIA GPUs and GPU servers, which enable organizations to process massive datasets, train complex AI models, and run compute-intensive applications efficiently.

From individual developers to enterprise data centers, GPU-based computing has become the foundation of modern workloads.

This guide covers NVIDIA GPUs, GPU server architecture, key components, and real-world use cases to help you understand their role in today’s computing landscape.

What Is an NVIDIA GPU?

An NVIDIA GPU is a specialized processor designed to accelerate parallel computations. Unlike CPUs, which are optimized for running a small number of threads with low latency, GPUs are built to perform thousands of operations simultaneously.

NVIDIA GPUs are widely used in AI and machine learning, data analytics, scientific simulation, and rendering and visualization.

Modern AI frameworks such as PyTorch and TensorFlow rely heavily on NVIDIA’s CUDA architecture for accelerated computing.
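In practice, framework code usually checks for a CUDA-capable GPU at startup and falls back to the CPU when none is found. The snippet below is a minimal sketch of that pattern using PyTorch's `torch.cuda.is_available()`. The helper name `pick_device` is hypothetical, and the fallback when PyTorch is not installed is an assumption made so the example runs anywhere.

```python
def pick_device() -> str:
    """Return "cuda" when PyTorch can see an NVIDIA GPU, otherwise "cpu"."""
    try:
        import torch  # optional dependency; assumed, not required
    except ImportError:
        return "cpu"  # no PyTorch installed, so no CUDA path to use
    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    print(f"Running on: {pick_device()}")
```

The returned string can be passed to calls such as `tensor.to(device)` so the same script runs on a laptop and on a GPU server.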

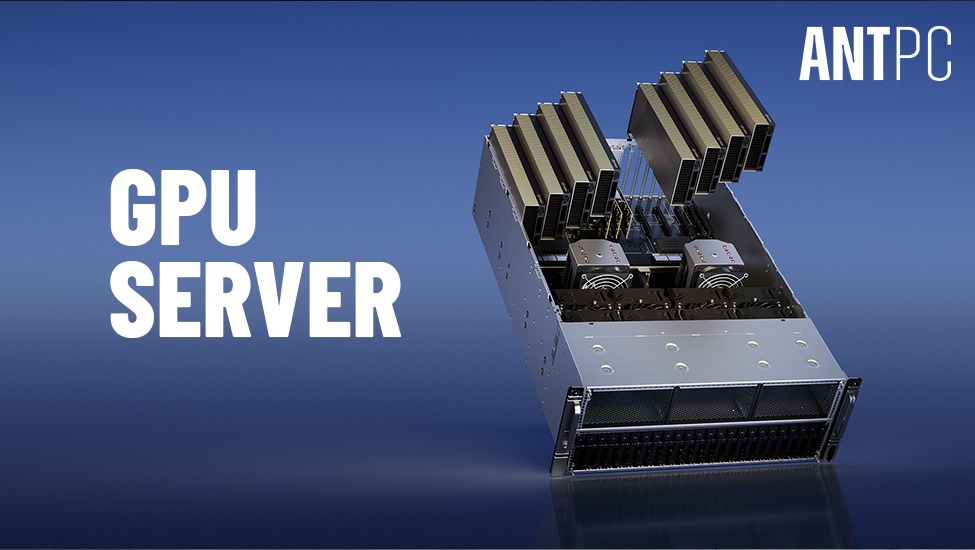

What Is a GPU Server?

A GPU server is a high-performance system that integrates multiple GPUs into a single platform to handle large-scale computational workloads.

Unlike traditional servers, GPU servers are specifically designed for AI training, large-scale data processing, simulation, and other high-performance computing tasks.

These systems are commonly deployed in data centers and enterprise environments.

NVIDIA GPU vs CPU: Why GPUs Are Critical

The key advantage of GPUs lies in parallel processing.

| Feature | GPU | CPU |
| --- | --- | --- |
| Core Count | Thousands | Few cores |
| Processing Type | Parallel | Sequential |
| Best For | AI, rendering, HPC | General tasks |
| Performance | High for compute workloads | Limited for parallel tasks |

This makes GPUs significantly more efficient for tasks like neural network training and simulation.
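How much a given workload gains depends on how much of it can actually run in parallel. One common way to reason about this is Amdahl's law, which this guide does not otherwise cover and which is brought in here purely as an illustration. The sketch below assumes a workload where a fixed fraction parallelizes perfectly across `workers` cores; real GPU speedups also depend on memory bandwidth and data transfer costs, which it ignores.

```python
def amdahl_speedup(parallel_fraction: float, workers: int) -> float:
    """Ideal speedup when `parallel_fraction` of the work is spread over `workers`."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if workers < 1:
        raise ValueError("workers must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / workers)


if __name__ == "__main__":
    # Hypothetical workloads: the fractions are illustrative, not measured.
    for fraction in (0.50, 0.95, 0.99):
        speedup = amdahl_speedup(fraction, workers=1000)
        print(f"{fraction:.0%} parallel on 1000 workers: {speedup:.1f}x")
```

The serial part sets the ceiling: a workload that is 95% parallel can never exceed a 20x speedup, no matter how many cores are available. This is why highly parallel tasks such as neural network training benefit the most.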

Types of NVIDIA GPUs for Workstations and Servers

NVIDIA offers a wide range of GPUs tailored for different use cases.

Consumer GPUs

Best for:

Professional Workstation GPUs

Best for:

Data Center GPUs

Best for:

GPU Server Architecture Explained

A GPU server combines multiple high-performance components to deliver massive compute power.

Key Components of a GPU Server

1. Multiple GPUs

GPU servers typically include 4 to 8 GPUs, depending on workload requirements.

2. High-Core CPUs

The CPUs manage system tasks and coordinate GPU workloads.

Common options:

3. High-Speed Interconnects

Technologies like NVLink enable fast communication between GPUs.

4. Large System Memory

GPU servers often include 128GB to 1TB+ RAM.

5. High-Performance Storage

NVMe SSD arrays ensure fast data throughput.

6. Advanced Cooling Systems

GPU servers require efficient cooling to handle thermal loads.
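On a running system, the GPU part of this component list can be checked from a script. The sketch below shells out to `nvidia-smi`, which ships with the NVIDIA driver, and asks for each GPU's name and total memory. The helper name `list_gpus` is hypothetical, and returning an empty list when the tool is missing is an assumption made so the example also runs on machines without an NVIDIA GPU.

```python
import shutil
import subprocess


def list_gpus() -> list[str]:
    """Return one "name, total memory" string per visible NVIDIA GPU."""
    if shutil.which("nvidia-smi") is None:
        return []  # NVIDIA driver tools are not installed here
    try:
        result = subprocess.run(
            ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader"],
            capture_output=True,
            text=True,
            timeout=30,
        )
    except (OSError, subprocess.TimeoutExpired):
        return []  # the tool exists but could not be run
    if result.returncode != 0:
        return []  # e.g. driver not loaded
    return [line.strip() for line in result.stdout.splitlines() if line.strip()]


if __name__ == "__main__":
    gpus = list_gpus()
    print(f"{len(gpus)} GPU(s) detected")
    for index, description in enumerate(gpus):
        print(f"  GPU {index}: {description}")
```

On a typical GPU server this should report one line per installed GPU, which is a quick way to confirm that every card is visible to the driver.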

GPU Server vs AI Workstation

| Feature | GPU Server | AI Workstation |
| --- | --- | --- |
| GPUs | Multiple (4-8+) | 1-4 |
| Users | Multiple users | Single user |
| Deployment | Data center | Local setup |
| Scalability | High | Moderate |
| Cost | Enterprise-level | Lower upfront |

GPU servers are ideal for large-scale operations, while workstations are suited for development and testing.

Use Cases of NVIDIA GPU Servers

Artificial Intelligence and Machine Learning

GPU servers accelerate training of large models, including architectures similar to those used in GPT and LLaMA.
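A rough way to see why large models need multi-GPU servers is to estimate how much memory the weights alone occupy. The sketch below multiplies the parameter count by the bytes per parameter for a given precision. The function names, the model sizes, and the 80 GB per-GPU capacity are illustrative assumptions, and the estimate covers weights only: training also needs memory for gradients, optimizer state, and activations, so real requirements are higher.

```python
import math

# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "bf16": 2, "int8": 1}


def weight_memory_gb(num_params: int, dtype: str = "fp16") -> float:
    """Memory needed for the model weights alone, in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def min_gpus_for_weights(num_params: int, gpu_memory_gb: float, dtype: str = "fp16") -> int:
    """Lower bound on the GPU count needed just to hold the weights."""
    return max(1, math.ceil(weight_memory_gb(num_params, dtype) / gpu_memory_gb))


if __name__ == "__main__":
    # Hypothetical model sizes; 80 GB per GPU is an assumed capacity.
    for params in (7_000_000_000, 70_000_000_000):
        size = weight_memory_gb(params)
        gpus = min_gpus_for_weights(params, gpu_memory_gb=80)
        print(f"{params / 1e9:.0f}B params in fp16: {size:.0f} GB, at least {gpus} GPU(s)")
```

Treat the result as a floor rather than a sizing recommendation. It shows only that the weights of a sufficiently large model cannot fit on a single card.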

Data Analytics

GPU servers process large datasets efficiently using parallel computation.

Scientific Research

GPU servers run simulations in physics, chemistry, and genomics.

Rendering and Visualization

Animation studios and design teams use GPU servers for rendering and visualization.

Cloud Computing

Cloud providers such as Amazon Web Services, Google Cloud Platform, and Microsoft Azure run GPU servers to offer scalable, on-demand compute resources.

Recommended GPU Server Configurations

Entry-Level GPU Server

Mid-Range GPU Server

Enterprise GPU Server

Future of NVIDIA GPUs and GPU Servers

The evolution of GPU computing continues to accelerate with advancements in architecture and AI optimization.

Emerging innovations include:

New architectures such as NVIDIA Blackwell are expected to redefine performance benchmarks in AI and HPC workloads.

Key Takeaways

NVIDIA GPUs are essential for modern AI and compute workloads

GPU servers enable large-scale parallel processing

Multi-GPU systems significantly outperform traditional servers on parallel workloads

Choosing the right configuration depends on workload size

GPU computing is central to future technological advancements

Frequently Asked Questions About NVIDIA GPUs and GPU Servers

What is a GPU server used for?

A GPU server is used for AI training, data processing, simulation, and high-performance computing tasks.

Why are NVIDIA GPUs preferred for AI?

NVIDIA GPUs support CUDA, which is widely used in AI frameworks like PyTorch and TensorFlow.

How many GPUs can a GPU server have?

Most GPU servers support 4 to 8 GPUs, depending on the system design.

Is a GPU server better than a workstation?

GPU servers are better for large-scale workloads, while workstations are ideal for development and testing.

Can GPU servers be used for cloud computing?

Yes, cloud platforms use GPU servers to deliver scalable compute resources.

Final Thoughts

NVIDIA GPUs and GPU servers have become the backbone of modern computing, enabling breakthroughs in artificial intelligence, data science, and high-performance computing. Whether deployed in workstations or large-scale data centers, these systems provide the performance and scalability required to handle complex workloads efficiently.

Understanding GPU architecture and server configurations helps organizations and professionals make informed decisions when building or scaling their computing infrastructure.
